Quick Answer: Agentic contract AI autonomously executes multi-step contract workflows — drafting, reviewing, routing, and negotiating — without requiring human prompts at each stage. Prompt-based contract AI responds to individual queries but cannot initiate or complete complex processes on its own. For enterprise contract management, the gap in productivity, accuracy, and scalability between the two approaches is substantial.

Key Takeaways

- Prompt-based AI assists; agentic AI acts. The distinction is not cosmetic — it determines whether AI reduces individual task effort or transforms entire contract operations.

- Organizations deploying agentic CLM report contract cycle time reductions of 70–85%, compressing processing from weeks to days without increasing headcount.

- Enterprise-scale contract management requires multi-agent orchestration, not single-model prompting — because real contract workflows span legal, procurement, finance, and compliance simultaneously.

- Governance and auditability are non-negotiable for regulated industries. Agentic systems purpose-built for enterprise CLM embed audit trails and human-in-the-loop controls by design; prompt-based tools do not.

- The CLM market is at an inflection point. According to Gartner, organizations that deploy AI-native CLM platforms see measurably better contract risk outcomes than those layering AI onto legacy systems.

What Is Prompt-Based Contract AI?

Prompt-based contract AI is a system where a human user asks a question or issues an instruction, and the AI responds with an answer or draft — then stops. The interaction is transactional and single-turn.

In practice, this looks like: uploading a contract and typing "summarize the key obligations" or "flag any indemnification clauses." The AI delivers a response, and the workflow returns to the human. Each subsequent action requires a new prompt.

Prompt-based tools — including general-purpose large language models applied to contract tasks — have genuine utility. They can accelerate specific micro-tasks: spotting a non-standard clause, generating a first draft from a template, or explaining legal terminology to a non-lawyer. According to McKinsey's 2024 State of AI report, legal and contract review ranks among the highest-value generative AI use cases for knowledge workers.

But prompt-based AI has a hard ceiling.

The Core Limitations of Prompt-Based Contract AI

- No memory across interactions. Apart from the history of a single chat session, each prompt is effectively stateless: the AI does not retain context from prior sessions or know the history of a specific contract relationship.

- No workflow execution. The AI can describe what should happen next but cannot trigger it — routing for approval, notifying a stakeholder, or updating a contract repository requires human action.

- No cross-system integration. Prompt-based tools cannot read your ERP, check your supplier database, or push a signed document into your CLM system without custom engineering.

- No escalation logic. When a clause falls outside standard parameters, the AI cannot identify it as a negotiation priority or automatically loop in legal counsel.

- Inconsistency at scale. Results depend heavily on prompt quality. Enterprise teams cannot standardize outcomes when every interaction depends on how an individual frames their query.

These limitations are not bugs — they are fundamental to how prompt-response architectures work. They reflect a design philosophy built for individual productivity, not organizational operations.

What Is Agentic AI for Contracting?

Agentic AI for contracting autonomously plans, executes, and manages multi-step contract workflows using a coordinated network of specialized AI agents — without requiring a human prompt at each step.

The agent receives a goal or trigger (a new contract request, an uploaded NDA, a renewal milestone), then independently determines the sequence of actions required, executes them across integrated systems, and surfaces results or escalations to the appropriate human at the appropriate time.

This is a different paradigm entirely. The human defines policies and oversight boundaries; the AI executes within them.
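The loop described above (trigger, plan, execute, escalate) can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions. The trigger names, step names, and `run_agent` function are hypothetical, and the plan is a fixed lookup table. A production agent would typically plan dynamically and persist state between steps.

```python
from dataclasses import dataclass, field
from typing import Optional

# Hypothetical plans: each trigger type maps to an ordered list of step names.
PLANS = {
    "new_request": ["draft_from_template", "risk_review", "route_for_approval"],
    "uploaded_nda": ["classify", "extract_terms", "apply_playbook", "route_for_approval"],
    "renewal_milestone": ["check_obligations", "notify_owner"],
}


@dataclass
class RunResult:
    completed: list = field(default_factory=list)
    escalated_at: Optional[str] = None


def run_agent(trigger: str, steps: dict, context: dict) -> RunResult:
    """Execute the plan for a trigger in order; stop and hand off when a step escalates."""
    result = RunResult()
    for step_name in PLANS[trigger]:
        outcome = steps[step_name](context)
        if outcome == "escalate":
            # Surface the exception to a human instead of continuing autonomously.
            result.escalated_at = step_name
            break
        result.completed.append(step_name)
    return result


# Usage: every step succeeds except the playbook check, which finds a non-standard term.
steps = {
    "classify": lambda ctx: "ok",
    "extract_terms": lambda ctx: "ok",
    "apply_playbook": lambda ctx: "escalate" if ctx["non_standard_terms"] else "ok",
    "route_for_approval": lambda ctx: "ok",
}
outcome = run_agent("uploaded_nda", steps, {"non_standard_terms": ["non-compete"]})
print(outcome)
# RunResult(completed=['classify', 'extract_terms'], escalated_at='apply_playbook')
```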

How Agentic Contract AI Works in Practice

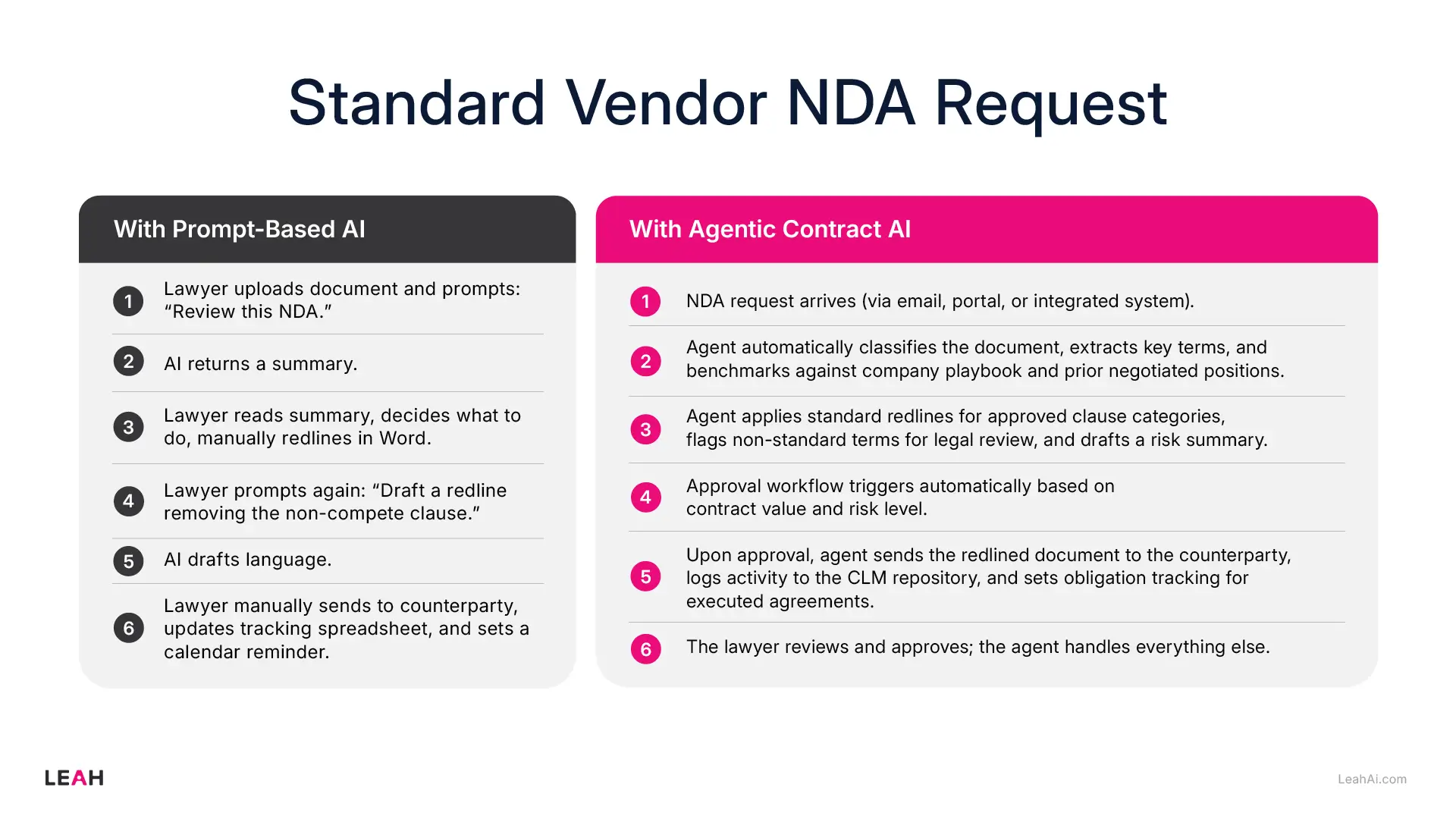

A practical example illustrates the difference clearly. Consider a standard vendor NDA request:

With prompt-based AI:

- Lawyer uploads document and prompts: "Review this NDA."

- AI returns a summary.

- Lawyer reads summary, decides what to do, manually redlines in Word.

- Lawyer prompts again: "Draft a redline removing the non-compete clause."

- AI drafts language.

- Lawyer manually sends to counterparty, updates tracking spreadsheet, and sets a calendar reminder.

With agentic contract AI:

- NDA request arrives (via email, portal, or integrated system).

- Agent automatically classifies the document, extracts key terms, and benchmarks against company playbook and prior negotiated positions.

- Agent applies standard redlines for approved clause categories, flags non-standard terms for legal review, and drafts a risk summary.

- Approval workflow triggers automatically based on contract value and risk level.

- Upon approval, agent sends the redlined document to the counterparty, logs activity to the CLM repository, and sets obligation tracking for executed agreements.

The lawyer reviews and approves; the agent handles everything else.
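The review-and-routing steps in the agentic list can be sketched as follows. The playbook entries, clause types, risk score, and dollar threshold are illustrative assumptions, not values from any real playbook or product. The sketch shows two things. First, standard clauses are handled from pre-approved positions, while unknown or sensitive clauses are flagged. Second, the approval route is derived from contract value and risk.

```python
from dataclasses import dataclass

# Hypothetical playbook: clause type -> what the agent may do without a human.
PLAYBOOK = {
    "confidentiality_term": "auto_redline",  # approved fallback language exists
    "governing_law": "auto_redline",
    "non_compete": "flag_for_legal",  # always needs a lawyer
}

# Hypothetical approval thresholds.
HIGH_RISK = 0.7
HIGH_VALUE = 250_000


@dataclass
class Clause:
    clause_type: str
    text: str


def review_nda(clauses, contract_value, risk_score):
    """Apply standard redlines, flag non-standard terms, and choose an approval route."""
    redlined, flagged = [], []
    for clause in clauses:
        # Clause types the playbook does not cover are treated as non-standard.
        action = PLAYBOOK.get(clause.clause_type, "flag_for_legal")
        if action == "auto_redline":
            redlined.append(clause.clause_type)
        else:
            flagged.append(clause.clause_type)

    if flagged or risk_score >= HIGH_RISK:
        route = "legal_review"
    elif contract_value >= HIGH_VALUE:
        route = "executive_approval"
    else:
        route = "standard_approval"
    return {"redlined": redlined, "flagged": flagged, "approval_route": route}


nda = [Clause("confidentiality_term", "..."), Clause("non_compete", "...")]
print(review_nda(nda, contract_value=0, risk_score=0.2))
# {'redlined': ['confidentiality_term'], 'flagged': ['non_compete'], 'approval_route': 'legal_review'}
```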

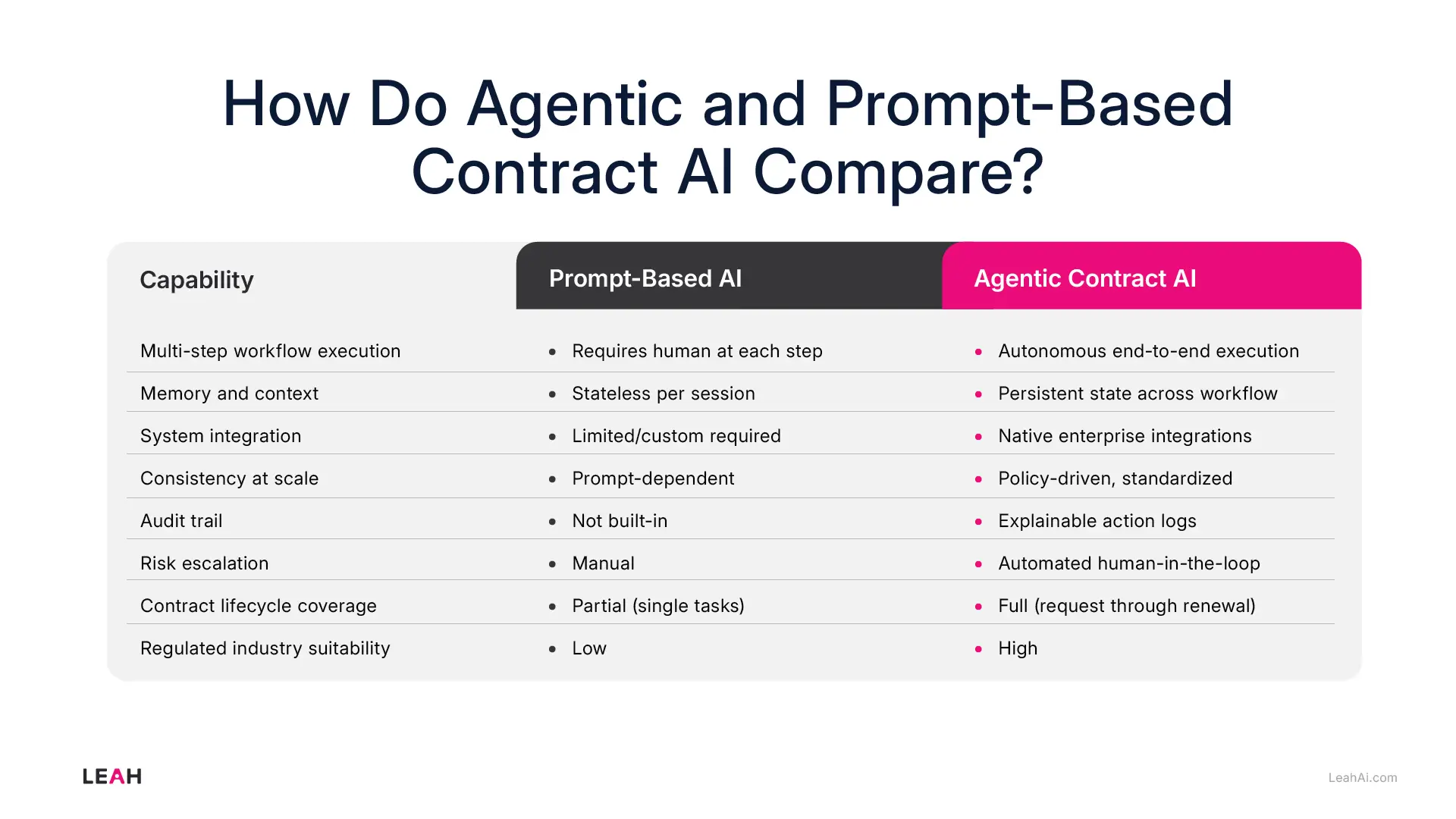

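How Do Agentic and Prompt-Based Contract AI Compare?

- Starting point: prompt-based AI waits for a human prompt; agentic AI starts from a goal or trigger, such as a new contract request, an uploaded NDA, or a renewal milestone.

- Memory: prompt-based AI keeps no context beyond a session; agentic AI maintains durable contract state across sessions and users.

- Workflow execution: prompt-based AI describes the next step; agentic AI routes approvals, notifies stakeholders, and updates the repository itself.

- Integration: prompt-based AI needs custom engineering to reach other systems; agentic AI works through connectors to ERP, CLM repository, and eSignature platforms.

- Escalation: prompt-based AI has no escalation logic; agentic AI surfaces exceptions according to predefined policies.

- Consistency and governance: prompt-based output varies with how each user frames a query; agentic AI applies the same playbook every time and logs its decisions for audit.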

Why Does This Distinction Matter for Enterprise Contract Management?

Enterprise contract operations are not single-task problems. A mid-size company managing 2,000 active contracts annually faces:

- Multiple contract types (MSAs, SOWs, NDAs, procurement agreements, employment contracts)

- Multiple business units with different approval hierarchies

- Regulatory obligations that vary by geography and industry

- Counterparty negotiations involving iterative redlines over days or weeks

- Post-signature obligations: renewals, payment milestones, compliance reporting

No amount of prompt optimization makes a stateless AI assistant capable of managing this complexity reliably.

According to World Commerce & Contracting, in a figure widely cited across the industry, poor contract management costs organizations an average of 9% of annual revenue. Most of that leakage occurs not during drafting but in the handoffs between stages, which is exactly where prompt-based AI provides no assistance.

Agentic AI was designed for handoffs. Its value is greatest precisely where human attention is most likely to fail: the gap between "contract reviewed" and "contract executed and tracked."

What Should Agentic CLM Actually Look Like in 2026?

Not every vendor describing their product as "agentic" has built a genuinely agentic system. The term has become marketing shorthand for any AI-enabled contract tool. Here is what genuine agentic CLM architecture requires.

1. Multi-Agent Orchestration, Not a Single Model

True agentic CLM deploys specialized agents that collaborate — a drafting agent, a risk analysis agent, an obligations tracking agent, and a workflow orchestration layer that coordinates them. This is meaningfully different from a single LLM with a longer prompt. These agents (and others like them) form the architecture underlying Leah's platform.

Specialized agents are more accurate in their domain, more explainable in their reasoning, and more maintainable as enterprise needs evolve. A system with genuine multi-agent architecture can delegate, escalate, and hand off between agents the way a well-run legal and procurement team does between human specialists.
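The following is a minimal sketch of the hand-off pattern. It assumes each agent is a plain Python function that updates a shared case record and names the next agent. The agent names, the `orchestrate` loop, and the liability rule are hypothetical and simplified; real agents would call models and tools. The sketch does not describe how Leah or any other platform is implemented.

```python
def drafting_agent(case):
    case["draft"] = f"{case['type']} draft from approved template"
    return "draft created", "risk_agent"


def risk_agent(case):
    case["risk"] = "high" if case.get("uncapped_liability") else "low"
    # High risk is escalated to a human specialist; low risk moves on.
    next_hop = "human_review" if case["risk"] == "high" else "obligations_agent"
    return f"risk {case['risk']}", next_hop


def obligations_agent(case):
    case["obligations"] = ["renewal notice due 60 days before term end"]
    return "obligations recorded", None  # end of the automated chain


AGENTS = {
    "drafting_agent": drafting_agent,
    "risk_agent": risk_agent,
    "obligations_agent": obligations_agent,
}


def orchestrate(case, start="drafting_agent", max_hops=10):
    """Pass the case between specialized agents until done or escalated to a human."""
    trail, current = [], start
    while current is not None and len(trail) < max_hops:
        if current == "human_review":
            trail.append(("human_review", "escalated"))
            break
        agent_name = current
        note, current = AGENTS[agent_name](case)
        trail.append((agent_name, note))
    return trail


print(orchestrate({"type": "MSA", "uncapped_liability": True}))
# [('drafting_agent', 'draft created'), ('risk_agent', 'risk high'), ('human_review', 'escalated')]
```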

2. Long-Horizon State Management

Contract management processes routinely span days, weeks, or months. An agentic system must maintain durable state across sessions and across users, remembering where a contract is in its lifecycle, what approvals have been obtained, and what the counterparty has already conceded in negotiation.

This is technically demanding. It requires stateful execution frameworks, not just a chat interface with conversation history.
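The key requirement is that state lives outside the conversation. The following minimal sketch uses Python's built-in `sqlite3` module and assumes a single table keyed by a hypothetical contract ID, with the stage and negotiation context stored as JSON. A production system would add versioning, concurrency control, and access controls.

```python
import json
import sqlite3


def open_store(path=":memory:"):
    """Open (or create) the state table; pass a file path so state survives restarts."""
    conn = sqlite3.connect(path)
    conn.execute(
        "CREATE TABLE IF NOT EXISTS contract_state ("
        "contract_id TEXT PRIMARY KEY, stage TEXT NOT NULL, data TEXT NOT NULL)"
    )
    return conn


def save_state(conn, contract_id, stage, data):
    # INSERT OR REPLACE keeps exactly one current row per contract.
    conn.execute(
        "INSERT OR REPLACE INTO contract_state (contract_id, stage, data) VALUES (?, ?, ?)",
        (contract_id, stage, json.dumps(data)),
    )
    conn.commit()


def load_state(conn, contract_id):
    row = conn.execute(
        "SELECT stage, data FROM contract_state WHERE contract_id = ?", (contract_id,)
    ).fetchone()
    if row is None:
        return None
    return {"stage": row[0], "data": json.loads(row[1])}


conn = open_store()
save_state(conn, "MSA-1042", "negotiation", {
    "approvals": ["finance"],
    "counterparty_concessions": ["accepted 45-day payment terms"],
})
print(load_state(conn, "MSA-1042"))
# {'stage': 'negotiation', 'data': {'approvals': ['finance'], 'counterparty_concessions': ['accepted 45-day payment terms']}}
```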

3. Human-in-the-Loop by Design, Not by Accident

The goal of agentic AI is not to remove humans from contract management — it is to ensure humans spend their time on decisions that genuinely require judgment, rather than administrative tasks that do not.

Well-designed agentic CLM systems define escalation policies in advance: which contract types require legal sign-off, which clause deviations trigger automatic review, which counterparty requests fall within pre-approved negotiation ranges. The agent operates autonomously within those boundaries and surfaces exceptions intelligently.

This is not a limitation of the technology — it is a feature. Enterprise risk management depends on predictable human oversight at predictable points.
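Because the policies are defined in advance, they can be expressed as data that the agent checks before acting. The following minimal sketch assumes hypothetical policy fields and uses payment terms as one example of a pre-approved negotiation range. The contract types, clause names, and 30-to-60-day range are illustrative only.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EscalationPolicy:
    # Hypothetical policy values; each field answers one question from the text.
    legal_signoff_types: frozenset = frozenset({"MSA", "employment"})
    review_on_deviation: frozenset = frozenset({"indemnification", "limitation_of_liability"})
    payment_days_range: tuple = (30, 60)  # pre-approved negotiation range


def escalation_reasons(policy, contract_type, deviated_clauses, requested_payment_days):
    """Return why a human must review; an empty list means the agent may proceed."""
    reasons = []
    if contract_type in policy.legal_signoff_types:
        reasons.append(f"{contract_type} requires legal sign-off")
    for clause in deviated_clauses:
        if clause in policy.review_on_deviation:
            reasons.append(f"deviation in {clause}")
    low, high = policy.payment_days_range
    if not low <= requested_payment_days <= high:
        reasons.append(f"payment terms of {requested_payment_days} days outside {low}-{high}")
    return reasons


policy = EscalationPolicy()
print(escalation_reasons(policy, "NDA", ["governing_law"], 45))  # []
print(escalation_reasons(policy, "NDA", ["indemnification"], 90))
# ['deviation in indemnification', 'payment terms of 90 days outside 30-60']
```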

4. Explainability and Audit Trails

In regulated industries — financial services, healthcare, pharmaceuticals, defense — every contract decision must be auditable. Why was this clause accepted? Who approved this deviation from the standard playbook? When was this obligation tracking record last updated?

Agentic systems built for enterprise environments produce reasoning logs for every automated decision. This is not optional for compliance purposes; it is a baseline requirement.
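One way to make such logs trustworthy is to chain entries so that later edits become detectable. The following sketch is an illustration under stated assumptions, not a compliance-certified design. The `AuditLog` class and its field names are hypothetical. Hash chaining with SHA-256 is one common tamper-evidence technique, not something the text prescribes.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body):
    # Hash a canonical JSON encoding so key order cannot change the result.
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


class AuditLog:
    """Append-only decision log in which each entry records the hash of the previous one."""

    def __init__(self):
        self.entries = []

    def record(self, contract_id, decision, reason, actor):
        body = {
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "contract_id": contract_id,
            "decision": decision,
            "reason": reason,  # why the decision was made
            "actor": actor,  # agent name or human approver
            "prev_hash": self.entries[-1]["hash"] if self.entries else GENESIS,
        }
        entry = {**body, "hash": _digest(body)}
        self.entries.append(entry)
        return entry

    def verify(self):
        """Recompute the chain; False means an entry was altered or the chain was broken."""
        prev = GENESIS
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev_hash"] != prev or _digest(body) != entry["hash"]:
                return False
            prev = entry["hash"]
        return True


log = AuditLog()
log.record("NDA-77", "accepted clause 4.2", "matches approved playbook fallback", "risk_agent")
log.record("NDA-77", "approved deviation", "within delegated authority", "legal_counsel")
print(log.verify())  # True
log.entries[0]["reason"] = "edited later"
print(log.verify())  # False
```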

5. Enterprise System Integration

Contract management does not happen in isolation. Procurement contracts connect to ERP and supplier management platforms. Legal agreements connect to entity management and regulatory reporting systems. Employment contracts connect to HR and payroll.

An agentic CLM platform needs native integration across the enterprise stack — not theoretical API compatibility, but tested, production-grade connectors that make contract data available where it is needed without manual export and re-entry.
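The following minimal sketch covers the routing side of integration. It assumes a hypothetical `Connector` interface with a single `push` method and uses in-memory stand-ins for the real systems. Actual connectors would wrap each vendor's own API, handle authentication and retries, and map fields. None of that is shown here.

```python
from typing import Dict, List, Protocol


class Connector(Protocol):
    """Interface every downstream-system connector is assumed to satisfy."""

    name: str

    def push(self, record: dict) -> None: ...


class InMemoryConnector:
    """Test double standing in for a real ERP, HR, or entity-management connector."""

    def __init__(self, name: str):
        self.name = name
        self.received: List[dict] = []

    def push(self, record: dict) -> None:
        self.received.append(record)


# Which systems receive data for each contract category, following the text above.
ROUTES = {
    "procurement": ["erp", "supplier_management"],
    "legal": ["entity_management", "regulatory_reporting"],
    "employment": ["hr", "payroll"],
}


def sync_executed_contract(record: dict, connectors: Dict[str, Connector]) -> List[str]:
    """Push an executed contract's data to every system that needs it, without re-entry."""
    targets = ROUTES.get(record["category"], [])
    for target in targets:
        connectors[target].push(record)
    return targets


connectors = {name: InMemoryConnector(name) for names in ROUTES.values() for name in names}
print(sync_executed_contract({"id": "PO-311", "category": "procurement", "value": 48_000}, connectors))
# ['erp', 'supplier_management']
```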

Is Prompt-Based AI Useless for Contract Work?

No. Prompt-based AI remains valuable for specific, bounded contract tasks — particularly in smaller organizations, for occasional users, or for tasks that genuinely are one-off queries rather than part of a recurring workflow.

A general counsel who needs a quick summary of an unusual clause before a board meeting does not need an agentic system. A startup founder reviewing their first investor term sheet can get significant value from a well-prompted LLM.

The question is fit for purpose:

- Prompt-based AI is best suited for: ad hoc analysis, one-off drafting assistance, legal research, explaining complex language, and supporting individual users who manage a small number of contracts.

- Agentic contract AI is best suited for: enterprise-scale CLM, high-volume contract operations, regulated industries requiring governance, cross-functional workflows spanning legal and procurement, and organizations where contract cycle time and risk management are strategic priorities.

The distinction becomes consequential when organizations attempt to scale prompt-based tools to solve enterprise problems.

How Does Agentic CLM Deliver Measurable Business Outcomes?

The shift from prompt-based to agentic contract AI is not incremental — the performance difference is categorical.

Organizations deploying genuine agentic CLM at enterprise scale report outcomes including:

- Contract cycle time reductions of 70–85%, compressing review-to-signature from weeks to days. Medical device manufacturer Terumo, for example, reduced contract processing from a typical 2–4 week cycle to 3 days after deploying Leah's agentic CLM platform.

- Significant reduction in non-standard clause acceptance, because agentic systems enforce playbook compliance consistently rather than relying on individual reviewer knowledge.

- Faster onboarding of new contract types, because agent policies can be updated without retraining lawyers on new procedures.

- Improved obligation tracking and renewal capture, because the agent monitors active contracts continuously rather than relying on calendar reminders.
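Simple arithmetic can check the Terumo figures in the first bullet against the 70–85% range. Assume a two-to-four-week cycle means 10 to 20 business days and the 3 days are also business days. On that basis, the reduction works out to exactly 70% and 85%. If both figures are counted in calendar days (14 to 28 days), the reduction would be roughly 79% to 89%.

```python
def cycle_time_reduction(before_days: float, after_days: float) -> float:
    """Fractional reduction in cycle time, e.g. 0.7 for a 70% cut."""
    return 1 - after_days / before_days


# Assumption: 5 business days per week, and the new 3-day cycle is also in business days.
for weeks in (2, 4):
    print(f"{weeks} weeks -> 3 days: {cycle_time_reduction(weeks * 5, 3):.0%}")
# 2 weeks -> 3 days: 70%
# 4 weeks -> 3 days: 85%
```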

According to Forrester's research on intelligent contract management, organizations with mature AI-driven CLM programs report contract value leakage reduction of 40–60% compared to organizations using manual or partially automated systems.

What Should You Ask When Evaluating Contract AI Vendors in 2026?

When assessing whether a contract AI solution is genuinely agentic or effectively prompt-based, ask these questions:

- Can the system execute a complete contract workflow — from request through signature — without human intervention at each step? Ask for a live demonstration, not a slide.

- How does the system maintain state across sessions? If a contract is paused mid-negotiation, what happens to the context when work resumes?

- What happens when the AI encounters a clause outside its approved parameters? Describe the escalation mechanism in detail.

- What is the audit trail structure? Can you produce a log showing why a specific automated decision was made?

- How does the system integrate with your existing ERP, CLM repository, and eSignature platforms? What is the implementation timeline for those integrations?

- How is the AI updated when your playbook or legal policies change? Who controls that process, and how long does it take?

- What does the human-in-the-loop framework look like? Where specifically does the system require human approval, and where does it act autonomously?

The answers to these questions will quickly reveal whether a vendor has built a genuinely agentic system or a well-packaged prompt interface.

Frequently Asked Questions

Can a prompt-based AI tool become agentic with enough customization?

In theory, yes — but in practice, the engineering investment required to build reliable workflow automation, state management, and system integration on top of a prompt-based tool is equivalent to building a purpose-built agentic system. Organizations that have attempted this typically end up with brittle, high-maintenance solutions that lack the governance and auditability that enterprise CLM requires. For most organizations, purpose-built agentic CLM is more reliable and faster to deploy.

Is agentic contract AI safe to use in regulated industries like financial services or healthcare?

Yes, provided the system is built with enterprise-grade security and compliance architecture. Key requirements include SOC 2 Type II certification, data residency controls, role-based access with full audit logging, on-premise or private cloud deployment options, and documented human-in-the-loop controls for high-risk decisions. Not all "agentic" contract AI products meet these requirements — due diligence on security architecture is essential.

How long does it take to deploy an agentic CLM system?

Deployment timelines vary significantly based on integration complexity, contract volume, and organizational readiness. A well-scoped enterprise deployment with standard integrations typically takes 8–16 weeks to reach production-ready status. Pilots covering a single contract type or business unit can be operational in 4–6 weeks. The bottleneck is usually policy definition — establishing what the agent is authorized to do autonomously — not technical implementation.

Does agentic contract AI replace contract lawyers?

No. Agentic contract AI handles the administrative, analytical, and coordination work that currently consumes a disproportionate share of legal time. It executes standard playbook reviews, routes approvals, tracks obligations, and flags anomalies. This frees lawyers to focus on complex negotiations, strategic risk assessment, and advising the business — the work that requires genuinely human judgment.

What is the difference between an agentic CLM platform and a CLM system with AI features?

A CLM system with AI features adds AI capabilities (summarization, clause extraction, risk scoring) to a workflow platform that is still fundamentally human-driven. Humans remain responsible for moving contracts between stages. An agentic CLM platform inverts this model: AI agents drive the workflow, and humans define boundaries and review exceptions. The difference is architectural, not incremental — and it determines whether AI reduces task effort or transforms contract operations.

How does agentic contract AI handle multi-party contracts?

Purpose-built agentic CLM systems support multi-party workflows including coordinated redline exchanges, parallel approval tracks, and consolidated obligation management across all counterparties. This is an area where agentic architecture provides particular value, since multi-party coordination is exactly the kind of high-stakes, error-prone work that benefits most from automation.
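The following minimal sketch shows how parallel approval tracks and consolidated obligations might be tracked for one multi-party deal. The `MultiPartyDeal` class, party names, and obligations are hypothetical. Each counterparty's approval proceeds independently, while execution waits until every track is complete.

```python
from dataclasses import dataclass, field


@dataclass
class MultiPartyDeal:
    parties: list
    approvals: dict = field(default_factory=dict)  # party -> True once approved
    obligations: list = field(default_factory=list)  # (party, obligation) pairs

    def record_approval(self, party):
        if party not in self.parties:
            raise ValueError(f"unknown party: {party}")
        self.approvals[party] = True

    def pending(self):
        # Each party's approval track advances independently of the others.
        return [p for p in self.parties if not self.approvals.get(p)]

    def ready_to_execute(self):
        return not self.pending()

    def add_obligation(self, party, obligation):
        self.obligations.append((party, obligation))

    def obligations_for(self, party):
        return [o for p, o in self.obligations if p == party]


deal = MultiPartyDeal(parties=["Acme", "Globex", "Initech"])
deal.record_approval("Acme")
deal.record_approval("Initech")
print(deal.pending())  # ['Globex']
deal.record_approval("Globex")
print(deal.ready_to_execute())  # True
```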

What AI models power agentic contract AI?

Enterprise-grade agentic CLM platforms are typically model-agnostic, drawing on multiple large language models (from providers including OpenAI, Anthropic, Google, and Microsoft Azure) and combining them with proprietary, domain-trained models optimized for contract language and legal concepts. The orchestration layer — which coordinates agents, manages state, and enforces governance policies — is purpose-built rather than off-the-shelf. This architecture avoids vendor lock-in to any single AI provider and allows the system to use the best available model for each specific task.
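The following minimal sketch shows per-task model routing with fallback. This is one way a model-agnostic design could avoid dependence on a single provider. The backend functions are hypothetical placeholders that only echo the prompt, and no real provider SDK is called. The task names and preference order are also assumptions.

```python
from typing import Callable, Dict, List, Tuple

Backend = Callable[[str], str]


# Hypothetical backends; real ones would wrap a provider SDK or an in-house model.
def general_llm(prompt: str) -> str:
    return f"[general_llm] {prompt}"


def contract_model(prompt: str) -> str:
    return f"[contract_model] {prompt}"


# Per-task preference order: first available backend wins, later entries are fallbacks.
ROUTING: Dict[str, List[Backend]] = {
    "clause_extraction": [contract_model, general_llm],
    "summarization": [general_llm, contract_model],
}


def run_task(task: str, prompt: str, unavailable: frozenset = frozenset()) -> Tuple[str, str]:
    for backend in ROUTING[task]:
        if backend.__name__ in unavailable:
            continue  # e.g. provider outage or a model not approved for this data
        return backend.__name__, backend(prompt)
    raise RuntimeError(f"no available backend for task {task!r}")


print(run_task("clause_extraction", "Extract the termination clause"))
# ('contract_model', '[contract_model] Extract the termination clause')
print(run_task("clause_extraction", "Extract the termination clause", frozenset({"contract_model"})))
# ('general_llm', '[general_llm] Extract the termination clause')
```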